Incomplete Worlds

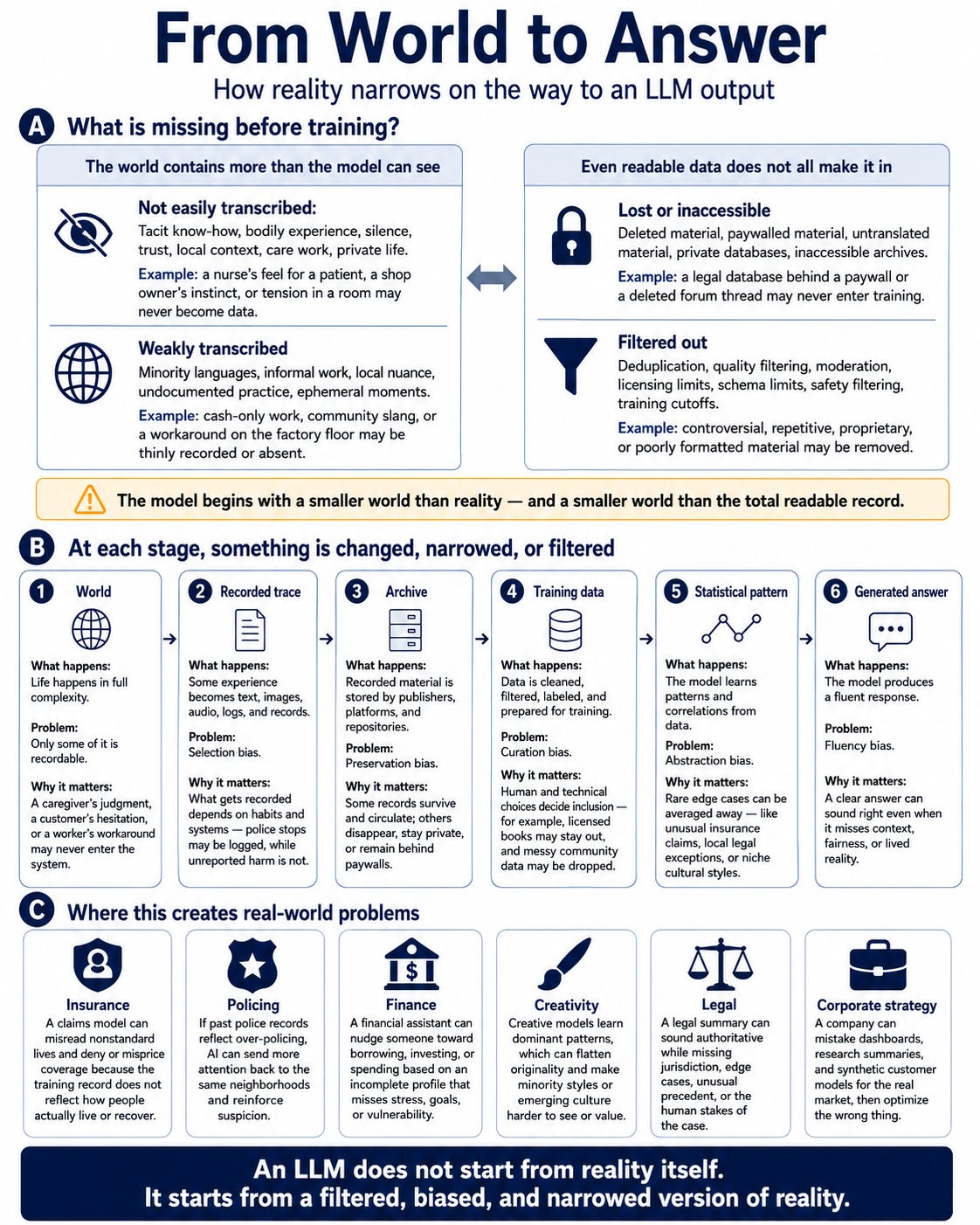

LLMs do not see the whole world, and that is both a problem and a human opportunity

It’s interesting and concerning to know that every time you use an LLM, you are only seeing a fraction of the world. It’s like using a 50mm lens on your camera; to see the full picture, you need a fisheye.

The problem is that LLMs are so darn convincing and reassuring that we somehow get tricked into believing that everything they spit out is complete and accurate.

The thing is, LLMs only see part of the world.

So what’s claimed as a picture of reality is far from it, and this presents several problems for those treating it as gospel.

To dive further into this, I took a look at Block, which recently got rid of 40% of its workers and replaced them with two AI Worlds and leaner teams.

One Block World provides a corporate view; it is a repository for everything the company does.

The other is the consumer view of the world, all the data Block holds on its customers.

This piece has two parts. First, I put the reader in the shoes of someone using a model to make decisions at Block, to illustrate the blind spots of such an approach. Then comes an essay where I go deep into what’s happening, what the results have been (very good to date for the company), the problems other companies have experienced from relying too heavily on AI models, and some organizational and personal fixes to help prevent trouble.

In short, it’s about ensuring that people use their judgment when using AI and that this behavior is built into protocols and training. It’s also about recognizing that the AI model’s view of the world is not the real world, and that human work is needed to close the gap between the two.